What is cognitive psychology

Cognitive psychology studies and analyzes our mental processes: how we make decisions in situations of uncertainty, how our memory and attention work. The emergence of this field was prompted by various events, including the development of linguistics and, in particular, the invention of the computer, which allowed people to create software capable of solving tasks that were once fully in the domain of humans.

Two of the scientists who developed such software were Allen Newell and Herbert A. Simon. Together, they created two of the earliest artificial intelligence programs: the Logic Theory Machine (1956) and the General Problem Solver (1957). In 1975, Newell and Simon received the Turing Award for their contributions to artificial intelligence, the psychology of human cognition, and list processing.

In their work, they drew on problem-space theory, in which a problem is understood as a space of possible states. To find a solution, you need to get from the initial state, when the task is set, to the goal state, when the task is solved. Both a human and a computer can handle operations of this kind.

In problem-space theory, a problem can be solved with two types of methods: algorithms and heuristics. An algorithm considers all possible hypotheses, which ideally leads to the right solution without fail. In everyday life, people rarely work this way because it takes too much time. That’s why we use heuristics: problem-solving methods that rely on rules of thumb rather than a strict formula and that usually provide a quick, workable, approximate solution.

Heuristics let you consider only a few options and fall back on others if the first ones prove unsuitable. This doesn’t guarantee success, but it saves considerable time and effort. For example, you’re deciding how to get home from work and come up with several options: walk to a metro station, take a bus to the metro station, or take the metro right away. You then factor in likely traffic jams and the weather and make your final decision based on this additional information. Most likely you will choose well, but, as already mentioned, there are no guarantees.

When heuristics go bad

We couldn’t function without heuristics, but some of them are pretty strange and can lead us astray. Take the classic brain teaser about the wolf, the goat, and the cabbage. A man has to take a wolf, a goat, and a cabbage across a river. His boat is so small that he can take only one of them at a time. If he takes the cabbage, the wolf will eat the goat. If he takes the wolf, the goat will eat the cabbage. The only solution involves carrying the goat back across the river at one point, which looks like undoing progress, and our brain doesn’t like going back. It’s very hard for us to break this taboo.
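The puzzle can be written down as a problem space: each state records what is still on the starting bank and where the man is, and each crossing is a move between states. The following is a minimal sketch, not a model of human reasoning; the state encoding and the names `ITEMS`, `FORBIDDEN`, `is_safe`, and `solve` are illustrative assumptions. It searches the states breadth-first and returns the shortest sequence of crossings.

```python
from collections import deque

ITEMS = frozenset({"wolf", "goat", "cabbage"})
# Pairs that must not be left together without the man.
FORBIDDEN = [{"wolf", "goat"}, {"goat", "cabbage"}]


def is_safe(bank):
    """A bank without the man is safe if no forbidden pair is on it."""
    return not any(pair <= bank for pair in FORBIDDEN)


def solve():
    # State: (items on the starting bank, man's side: 0 = start, 1 = far bank).
    start = (ITEMS, 0)
    goal = (frozenset(), 1)
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        (left, man), path = queue.popleft()
        if (left, man) == goal:
            return path
        here = left if man == 0 else ITEMS - left
        # The man crosses alone (None) or with one item from his bank.
        for cargo in [None, *sorted(here)]:
            new_left = set(left)
            if cargo is not None:
                if man == 0:
                    new_left.discard(cargo)
                else:
                    new_left.add(cargo)
            new_left = frozenset(new_left)
            new_man = 1 - man
            # The bank the man has just left must be safe.
            unattended = new_left if new_man == 1 else ITEMS - new_left
            if not is_safe(unattended):
                continue
            state = (new_left, new_man)
            if state not in seen:
                seen.add(state)
                direction = "over" if man == 0 else "back"
                queue.append((state, path + [(direction, cargo)]))
    return None


path = solve()
for direction, cargo in path:
    print(direction, cargo or "nothing")
```

The shortest path has seven crossings, and one of them is `("back", "goat")`: the exhaustive search has no reluctance about returning something it has already carried across.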

Another heuristic focuses on minimizing the difference between the current state and the goal: the so-called hill-climbing heuristic. It prescribes that, under uncertainty, we choose the step that seems to lead most directly to the desired result. Suppose we are walking through a forest, decide to climb to the top of a hill, and see several paths. Most likely, we will choose the one that goes steeply up, although this may well turn out to be a bad choice. Such heuristics are strongly tied to human emotions and to a person’s individual experience.
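The way hill climbing can go wrong is easy to show on a toy example. The sketch below assumes a made-up one-dimensional terrain (`terrain`) and two illustrative functions: `hill_climb`, which always steps to the higher neighbouring position, and `exhaustive_search`, which plays the role of the slow but reliable algorithm by checking every position.

```python
def hill_climb(heights, start):
    """Greedy ascent: keep stepping to the higher neighbour until none is higher."""
    position = start
    while True:
        neighbours = [i for i in (position - 1, position + 1)
                      if 0 <= i < len(heights)]
        best = max(neighbours, key=lambda i: heights[i], default=position)
        if heights[best] <= heights[position]:
            return position  # no neighbour is higher: a (possibly local) peak
        position = best


def exhaustive_search(heights):
    """The algorithmic alternative: inspect every position and take the highest."""
    return max(range(len(heights)), key=lambda i: heights[i])


# Hypothetical terrain: a small hill at index 2 and the true summit at index 7.
terrain = [1, 3, 5, 2, 1, 4, 7, 9, 6]

local_peak = hill_climb(terrain, start=0)
true_peak = exhaustive_search(terrain)
print(local_peak, true_peak)  # 2 7
```

Starting from index 0, the greedy climber stops on the small hill because every step away from it leads down, while the exhaustive search finds the real summit at the cost of looking at every position.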

There are other heuristics that often lead to the wrong decision. One example is the availability heuristic: we take the correct answer to be the one based on information that most readily comes to mind. Another is the analogy heuristic, in which a person tries to handle a situation the same way they handled a similar one before. Finally, there is the representativeness heuristic: our brain incessantly tries to find a pattern in everything.

Two systems of thinking

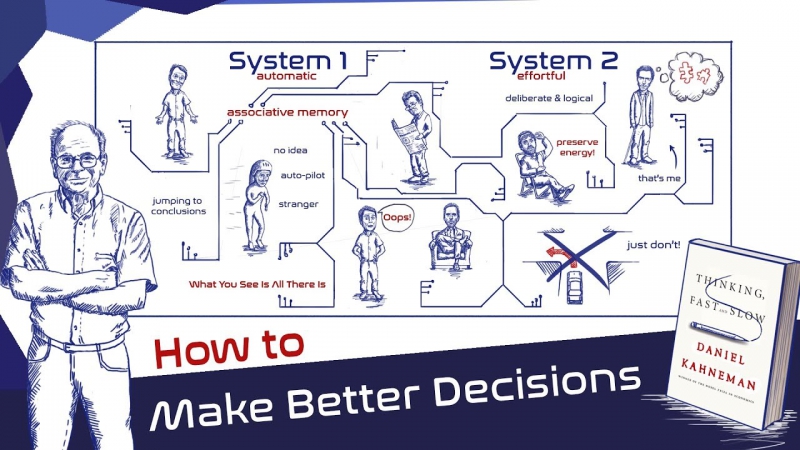

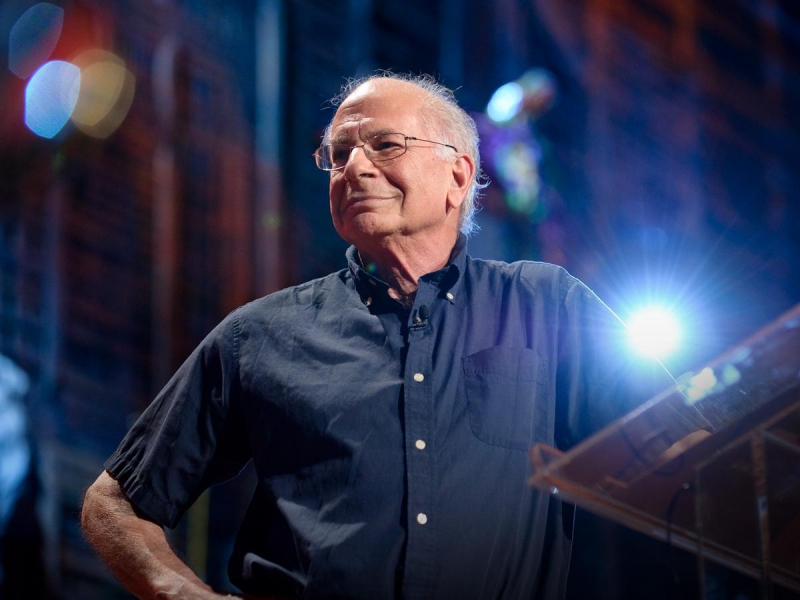

Heuristics were the focus of Daniel Kahneman, a prominent cognitive psychologist who studied decision-making under uncertainty. He mainly dealt with issues related to money: how irrationally we treat it and how we can value the same amount in completely different ways. For example, we may be willing to pay more than we planned for a computer we like, but not for a smaller item such as a flash drive, even if the difference in both cases is the same thousand rubles. Kahneman received the 2002 Nobel Memorial Prize in Economic Sciences for groundbreaking work in this field, much of it carried out with his colleague Amos Tversky, who died in 1996.

Kahneman and Tversky, like many other researchers, believed that two systems are engaged in the thinking process. The first, intuition, acts quickly and automatically but learns slowly. The second, logic, works slowly and requires a lot of effort but is flexible. We use it when faced with something new, uncertain, or strange, as well as when we feel that the first system, intuition, needs verification.

Kahneman describes several problems that illustrate the two systems of thinking. For example, a bat and a ball together cost 110 rubles, and the bat costs 100 rubles more than the ball. How much does the ball cost? If you switch on your logic and do the math, you get 5 rubles for the ball and 105 for the bat. But all too often, people are led astray by the numbers themselves, which just make you want to answer “10”. Switching to the second system can be difficult.
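The arithmetic behind the bat-and-ball problem takes only a few lines to check. In this small sketch, the names `TOTAL`, `DIFFERENCE`, and `satisfies` are illustrative; the numbers are the ones from the problem.

```python
TOTAL = 110       # price of the bat and ball together, in rubles
DIFFERENCE = 100  # the bat costs this much more than the ball


def satisfies(ball):
    """Check a candidate ball price against both conditions of the puzzle."""
    bat = ball + DIFFERENCE
    return ball + bat == TOTAL


# ball + (ball + DIFFERENCE) = TOTAL  =>  ball = (TOTAL - DIFFERENCE) / 2
ball = (TOTAL - DIFFERENCE) // 2
bat = ball + DIFFERENCE

print(ball, bat)       # 5 105
print(satisfies(10))   # False: a 10-ruble ball means a 110-ruble bat, 120 in total
```

The intuitive answer fails because it treats 100 rubles as the price of the bat rather than as the difference between the two prices.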

There is also the framing effect, a cognitive bias in which people choose between options based on how the task is worded. It can be illustrated by the following task: a disease appears that threatens to kill 600 people. Two vaccines have been developed to fight it: one is said to save 200 people, while the other is said to save only a third of them, and the rest will die. Many people choose the first option because it states an exact number of survivors, even though the two are essentially the same: a third of 600 is 200.

Further reading

There are two especially good books on the subject of thinking. Daniel Kahneman’s Thinking, Fast and Slow makes for a great introduction to the topic, and it makes you think, too. The other, Judgment Under Uncertainty: Heuristics and Biases, which Kahneman produced together with Paul Slovic and Amos Tversky, is a more complex read, with a focus on solving business tasks.

The lecture was given as part of the Open Week of Cognitive Experiments at St. Petersburg State University.