Until recently, neurologists believed that the human brain contains approximately 100 billion neurons. Only in 2009 did Dr. Suzana Herculano-Houzel, a researcher from Brazil, show that the number is considerably smaller: the human brain contains about 86 billion neurons. The 14-billion difference is substantial: it is the number of neurons in a baboon’s brain and half the number in a gorilla’s.

But even these 86 billion neurons are not the measure of a brain’s operational power. That power is measured by the number of links between neurons, since the links determine whether or not a signal is relayed to another neuron.

“To understand how the brain works, we need to know the number of such links. Each neuron has 10 to 15 thousand links to other cells. If you multiply these numbers, you get a million billion links, or operational units. By comparison, the most advanced processing unit being made today contains only about 3 billion operational units,” says Alexander Kaplan.
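The multiplication in the quote above can be checked with a minimal sketch. It uses only the figures given in the text (86 billion neurons, 10 to 15 thousand links per neuron, about 3 billion units in a processor); the variable and function names are illustrative, and "operational unit" is taken here simply to mean one link or one chip element.

```python
# Figures quoted in the article; names are illustrative assumptions.
NEURONS = 86_000_000_000                  # neurons in the human brain
LINKS_PER_NEURON = (10_000, 15_000)       # low and high estimate per neuron
CHIP_UNITS = 3_000_000_000                # "operational units" in a processor


def estimate_links(neurons: int, links_per_neuron: int) -> int:
    """Total number of links if every neuron has the same number of links."""
    return neurons * links_per_neuron


low = estimate_links(NEURONS, LINKS_PER_NEURON[0])
high = estimate_links(NEURONS, LINKS_PER_NEURON[1])

print(f"links: {low:.2e} to {high:.2e}")
print(f"brain-to-chip ratio: {low / CHIP_UNITS:,.0f}x to {high / CHIP_UNITS:,.0f}x")
```

Both ends of the range land near 10^15, which is the "million billion" in the quote, and exceed the processor figure by a factor of several hundred thousand.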

How do we make sense of all these millions of billions of links so that we can finally understand how our perception and memory work, what serves as a memory cell in the brain, how it encodes information and what it actually treats as an encoding object?

Memory: why it’s impossible to count the terabytes in our brain

There are still many myths surrounding human memory, says Alexander Kaplan. Even now one can come across estimates of the number of terabytes of data stored in our brains. Should we trust such claims, and is it even possible to measure the memory of a human brain in familiar units?

We know perfectly well how a machine “memorizes” information. For example, all 2,521,613 characters of “War and Peace” can be encoded in memory cells, and the total amount of data would be about 2.405 MB. Assuming the novel uses 256 distinct characters, one character requires log2 256 = 8 bits = 1 byte, so the whole of “War and Peace” takes up 2,521,613 bytes, or about 2.405 MB (with 1 MB = 1,048,576 bytes).
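The same arithmetic can be reproduced in a few lines. This is a sketch under the article's own assumptions (256 distinct characters, one fixed-width code per character); the additional assumption that a megabyte means 1,048,576 bytes is the convention that yields the figure of roughly 2.4 megabytes given above.

```python
import math

CHARACTERS = 2_521_613        # character count of the novel, from the article
ALPHABET_SIZE = 256           # assumed number of distinct characters
BYTES_PER_MB = 1024 * 1024    # assumption: binary megabyte

# A fixed-width code needs log2(alphabet size) bits per character.
bits_per_char = math.log2(ALPHABET_SIZE)
total_bytes = CHARACTERS * bits_per_char / 8
total_mb = total_bytes / BYTES_PER_MB

print(f"{bits_per_char:.0f} bits per character")
print(f"{total_bytes:,.0f} bytes = {total_mb:.3f} MB")
```

The point of the example is that a machine's memory is easy to count because both the encoding object (a character) and the memory cell (a bit) are known in advance.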

What serves as a memory cell in the human brain? That question is still unanswered, says Prof. Kaplan. One could assume that this function is fulfilled by the synapse, a link between two neurons. After all, unlike a neuron, it can be in either of two states: active or inactive. But that assumption raises another issue: a synapse can’t be used for precise encoding, as that would make for a memorization system that is far too slow compared to a machine. It is also unclear what the brain treats as an encoding object. Would letters, words or meanings count as such objects?

“It’s likely that we’re extracting words from meanings, not letters. Try to finish this sentence in your mind. That’s why we don’t need such a rapid communication system: we get ahead of the process, while machines are unable to do that. Yet, unlike machines, which have a clock frequency, human synapses differ from one another: some lag behind and others rush ahead. With this in mind, how can we possibly measure the memory capacity of our brain?” asks Alexander Kaplan.

Scientists have only recently begun to fill in these blank spaces in our knowledge of the brain’s structure. Neuroscience has been booming in recent years: tens of billions of dollars are being invested in such research, with projects of this kind in the USA, Europe, China, Japan and Russia. The scientists are focused, first and foremost, on modeling the brain and studying its structure. By slicing off layers whose thickness is measured in microns, researchers strive to understand not just the basic structures but even the inner workings of a single neuron.

For instance, Terrence J. Sejnowski, the renowned neurophysiologist, a researcher at the Howard Hughes Medical Institute and a member of the Salk Institute for Biological Studies and the University of California, San Diego, is currently studying a model reconstructed from such slices. His task is to determine how many states a synapse can take and whether it really works like a machine or, on the contrary, transmits signals at a higher or a lower rate.

“Sejnowski has managed to prove that there are 26 such gain levels in synapses. This means that not only do we have a million billion links, or synapses, in our brain, but that each of them has 26 states: not 0 to 1 as in a machine, but 0 to 25. The potential network and memory capacity increases many times over, because encoding is no longer single-bit and each cell holds 4.7 bits (log2 26 = 4.7 bits/synapse). This is where the flexibility and changing connection patterns come from that are so unattainable for machines and artificial neural networks,” comments Alexander Kaplan, adding that the nature of encoding objects remains an open question.
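The figure of 4.7 bits per synapse follows from the same logarithm used for a machine's memory cell. The sketch below compares a two-state cell with a 26-state one; the function name is illustrative, and the calculation assumes that all states are equally likely and perfectly distinguishable, which is an idealization rather than a claim about real synapses.

```python
import math


def bits_per_cell(states: int) -> float:
    """Information held by one cell with `states` equally likely states."""
    return math.log2(states)


binary_cell = bits_per_cell(2)    # a machine's 0/1 memory cell
synapse = bits_per_cell(26)       # 26 gain levels, as quoted in the article

print(f"binary cell: {binary_cell:.1f} bit")
print(f"26-state synapse: {synapse:.1f} bits")
print(f"gain over a binary cell: {synapse / binary_cell:.1f}x")
```

Under these assumptions each synapse carries about 4.7 times as much information as a single-bit cell. This says nothing yet about what the brain actually encodes in it, which is the open question raised in the text.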

But for how much longer can we last with just the resources of the human brain?

The finite resources of our brain, or why we suffer from more and more mental disorders

Over the last 30 years the world has transformed before our eyes: we receive information through high-speed media streams, and the responsibility we bear for our decisions is steadily increasing. All this leads to a total shift in our biological motivations. The way we store data has changed, too. People in ancient times passed information on orally, and their memory capacity differed greatly from ours. Later we shifted to writing our knowledge down. Today we are moving even further, and the amount of information grows day by day. Can our brains handle such a workload?

Colin C. Pritchard’s research suggests that we need to address the exhaustion of the brain’s resources as soon as possible. He analyzed data on the number of neurological disorders over 20 years (from 1990 to 2010) and concluded that, while the rate of deaths caused by heart disease and cancers more or less stabilized over this period, the rate of deaths caused by neurological disorders increased greatly and the disorders themselves became more dangerous.

Could artificial intelligence help us solve this issue?

This could certainly be the solution, concurs Prof. Kaplan.

“Indeed, if we delegate some of our tasks to a robot, it would take a great load off our brains. But first we need to figure out whether there is such a thing as artificial intelligence. I believe that there is currently nothing in the world that even resembles an AI. Everything we have so far is nothing but a slight, sometimes quite decent, improvement on a calculator,” he notes.

People have long been building assistants to help them solve intellectual problems. Examples include Charles Babbage’s difference engine and Semen Korsakov’s homeoscope, precursors to the calculator and to storage systems, both designed in the 19th century. John McCarthy himself, the man who coined the term “artificial intelligence”, defined intelligence as “the computational part of the ability to achieve goals in the world”. In other words he, too, saw such devices as tools that could assist with computations and help make decisions, but not possess intelligence of their own.

Then what about the machines that have already beaten world champions in such intellectual games as chess or Go? Deep Blue beat Garry Kasparov in 1997, Deep Fritz 10 defeated Vladimir Kramnik in 2006, and AlphaGo beat Lee Sedol in 2016.

“So how do they win against natural intelligence without having one of their own? Easy: by cheating. In the good sense of that word. The machine’s memory holds records of all the notable games. The machine plays against a human and considers its next move by comparing it with the most successful plays in history. A human does the exact same thing in this situation, but his memory is much smaller than a machine’s. Has anyone ever been allowed to peek at their laptop during a championship? Isn’t that exactly what the machine does? It keeps ‘peeking’ at its memory. How can one compare such opponents? And where’s the artificial intelligence here? This is just an assistant helping us to solve one task or another by instantly sorting through a myriad of options.”

What happens next?

According to sociologists, in 20 years 15 to 40 percent of the population will be replaced by automated systems. Such systems can also pose a danger to humanity.

“We need to be super careful with AI. Potentially more dangerous than nukes,” Elon Musk, the founder of Tesla and SpaceX, wrote on Twitter.

In April of this year the famed entrepreneur unveiled a new company called Neuralink that will develop systems aimed at making humans more intelligent and protecting them from being enslaved by artificial intelligence. Per its founders’ design, such technology will soon be used to improve memory and remedy the effects of brain damage. According to Musk, the company plans to release a product designed to help people with severe brain injuries in four years.

Neuralink is based on the idea of uniting a computer with the human brain. Musk has already spoken to more than a thousand experts in fields ranging from neurosurgery to microelectronics and assembled a core team that he himself will lead as CEO. The aim of Neuralink is to develop a “neural lace” technology that will allow tiny electrodes to be implanted in the human brain. This will supposedly eliminate the need for a “middleman” in the form of a keyboard, mouse or touchscreen, which will be replaced by a brain-machine interface.

This basically means that Musk’s team will be working on a fundamentally new method of communication. Speaking to Wait But Why, Musk explains his intentions:

“There are a bunch of concepts in your head that then your brain has to try to compress into this incredibly low data rate called speech or typing. If you have two brain interfaces, you could actually do an uncompressed direct conceptual communication with another person.”

Facebook, too, is working on a similar project. Musk’s Neuralink reveal coincided with Facebook’s announcement of a brain-machine interface project. Regina Dugan, who previously worked at Google and DARPA and now heads the secretive research lab Building 8, took the stage at the company’s annual F8 developer conference on April 20.

According to Dugan, Facebook aims to develop, within two years, a system that would allow users to type 100 words per minute using a neural interface. The developers want to translate into text the impulses that the brain sends to its speech center when a formed thought needs to be vocalized. Such a system will be most vital to people suffering from speech disorders. Later on, it might change the nature of interaction between people and computers.

As Alexander Kaplan notes, such an electronic “add-on” will turn people not into cyborgs, since that would entail a significant alteration of their body and mind, but into a sort of “cybersymbs”: a symbiosis of a human and an electronic assistant with much higher computational power.

Just how plausible is this?

“There are a few problems faced by the creators of such interfaces: how exactly do we connect to the brain? With all the complexity of the brain, how can this ‘add-on’ understand its desires and motives? They could connect to a single cell, but then what would one do with these discharges? A thousand-electrode connection unit would receive data from two thousand neurons. But that’s just two thousand out of hundreds of millions in just that one section of the brain. That would be a very narrow connection,” notes the researcher.
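To put the numbers in that quote in proportion, here is a rough sketch of the share of neurons such a unit would observe. The two thousand recorded neurons come from the quote; the bounds of 100 million and 900 million neurons for one brain section are assumptions chosen only to bracket "hundreds of millions", and the function name is illustrative.

```python
def coverage(recorded_neurons: int, region_neurons: int) -> float:
    """Fraction of a region's neurons that the electrodes can observe."""
    return recorded_neurons / region_neurons


RECORDED = 2_000              # neurons read by a thousand-electrode unit (quoted)
REGION_LOW = 100_000_000      # assumed lower bound for "hundreds of millions"
REGION_HIGH = 900_000_000     # assumed upper bound for "hundreds of millions"

for region in (REGION_LOW, REGION_HIGH):
    share = coverage(RECORDED, region)
    print(f"{region:,} neurons in the section -> {share:.6%} observed")
```

Even with the most favorable assumed bound, the interface would see only a few thousandths of a percent of the neurons in that section, which is what makes the connection so narrow.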

Scientific laboratories have offered a few solutions to this in recent years. The Neural Dust project being developed at UC Berkeley is based on the idea of compact sensors 20-30 microns in size, about the size of a neuron. Because of their minuscule size, they can literally be sprayed into the brain and then numbered using magnetic resonance mapping, explains Prof. Kaplan. Sure, one can “pollinate” the brain with these sensors, but how does one collect and interpret the data? None of these issues has been solved yet, he adds.

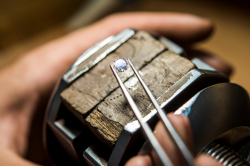

In the meantime, Elon Musk has proposed a different connection type, based on a 2015 study (Syringe-injected electronics). This method entails the use of a super-thin wire 6 microns in diameter made of carbon material. Such wire, the authors propose, could be used to weave a net with electric potential sensors in its nodes. The resulting “web” is incredibly thin and can be used to cover a microobject and sense the electric potential on its full area. In addition, the researchers propose mixing this net with a gel so that it can be applied to practically any surface.

“An example: the study describes a scenario in which the gel is injected into a mouse’s brain through a syringe. The net then ‘grows’ into the brain and settles inside it, sensing the electric potentials from all over. If this net can be made to interact with neurons and is injected into the mouse’s brain at its embryonic stage, it will indeed live on as part of the neural network. That’s Elon Musk’s line of thought, too. He gave this net a name: ‘neural lace’,” says Alexander Kaplan.

But Musk will also need to solve at least two other issues. First, he’ll need to implant the “lace” and ensure that it works. Second, he will need a robust connection: high-speed, broadband and multicommand.

“There is no theoretical approach for this yet. It’s quite possible that Mark Zuckerberg will get there much sooner, as he already has experts working in this area,” concludes Alexander Kaplan.