Ivan Burtsev, a Master’s student at the High-Performance Computing Department, gave a talk at the international Russian-German Science Slam held in St. Petersburg last weekend. The young expert explained what artificial intelligence is and how machines learn to perceive new things.

Artificial intelligence (AI) is not what the movies show. In fact, it is a set of methods and algorithms, or a piece of software, that can solve tasks without relying on algorithms written by a human and that is also able to be aware of itself. Those who work with AI aim to solve two main problems. The first is to understand how the human brain works, and the second is to create an "assistant" that can do something better and faster than a human. Robots learn new things mainly through vision, as tactile and audio information alone is not enough.

The first problem solved with computer vision is object classification: for instance, determining whether a picture shows a cat or a dog. The next problem is determining an object’s location, which is solved using localization methods. The third important task is verification, which is about comparing objects.

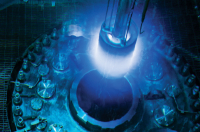

A computer "understands" a picture as a matrix of numbers. In a color image each element encodes a color (here, using the RGB model); in a monochrome image each element holds a single intensity value, from which contrast is determined. To let a computer understand images, scientists fed this matrix to neural networks.
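As a minimal sketch of the "picture as a matrix" idea, the NumPy snippet below builds a tiny made-up RGB image and derives a monochrome version from it. The pixel values are invented for illustration, and the brightness weights are the commonly used ITU-R BT.601 coefficients, which are an assumption here and were not mentioned in the talk.

```python
import numpy as np

# A hypothetical 2x3 color image: every pixel stores three 8-bit numbers
# (red, green, blue), so the array has shape (height, width, 3).
rgb = np.array([
    [[255, 0, 0], [0, 255, 0], [0, 0, 255]],
    [[0, 0, 0], [128, 128, 128], [255, 255, 255]],
], dtype=np.uint8)

# Monochrome version: one brightness number per pixel.
# The weights are the ITU-R BT.601 luma coefficients (an assumption).
weights = np.array([0.299, 0.587, 0.114])
gray = rgb.astype(np.float64) @ weights

print(rgb.shape)   # (height, width, channels)
print(gray.shape)  # (height, width)
print(gray.round(1))
```

The color image is a three-dimensional array, while the monochrome one is an ordinary two-dimensional matrix; both are just numbers as far as the computer is concerned.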

Neural networks imitate the human brain: they consist of separate units arranged in layers and connected by synapses. At first, images were presented to the network as a vector: the network took the first pixel of the first line, then the first pixel of the second line, and so on. This experiment failed because the system could not determine what was in the picture. That was no surprise: the method is like reading a book by taking the first letter of the first line, then the first letter of the second line, and so forth. Read this way, a page is very hard to understand.
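The following sketch illustrates why the plain vector loses the picture's geometry. It uses a made-up 4x5 "image" whose pixel values are simply their own positions; the helper `flat_index` is hypothetical and only serves this illustration.

```python
import numpy as np

height, width = 4, 5
# Hypothetical image whose pixel values are 0, 1, 2, ... in reading order.
image = np.arange(height * width).reshape(height, width)

# Row by row: the usual way of turning a matrix into a vector.
row_vector = image.flatten()

# Column by column: first pixel of line one, first pixel of line two, ...
# This matches the reading order described in the talk.
column_vector = image.flatten(order="F")

def flat_index(row, col):
    """Position of pixel (row, col) inside the row-by-row vector."""
    return row * width + col

# Two pixels that touch vertically in the image...
above, below = flat_index(1, 2), flat_index(2, 2)
# ...end up a whole image width apart in the vector.
distance = below - above

print(column_vector[:4])
print(distance)
```

Whichever order is chosen, pixels that are neighbors in one direction of the picture are no longer neighbors in the vector, and a network that sees only the vector has no built-in notion of which entries were adjacent.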

Images can also be processed using feature engineering, in which distinctive image features are found manually rather than automatically. For instance, a researcher picks out a cat’s features, such as ears and whiskers, and then analyzes parts of images by hand using these features. This approach was more effective than turning images into vectors, but still not effective enough.

In the 1980s Kunihiko Fukushima created a convolutional neural network model. Its first successful implementation, by Yann LeCun, appeared in 1998: a high-precision neural network named LeNet-5 that recognized handwritten digits and letters on bank checks and account forms.

Unlike a typical neural network, a convolutional one preserves information about the image’s features. Using many filters, the system looks for straight, slanted and curved lines and builds a feature map from them. Then it makes the image smaller: pooling layers analyze parts of the feature map, choose the section that best matches the required pattern and pass it on to the next layer. Image processing therefore repeats the same steps: the system "scans" an image, creates its feature map, shrinks it, scans it again and so forth. Finally the image is transformed into a vector, but this vector differs from the one used in the approach described earlier: the vector produced by a convolutional network preserves the geometric properties of the image.
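The scan, feature map and shrink steps described above can be sketched in a few lines of NumPy. This is a simplified illustration under stated assumptions, not the way LeNet-5 or any real network is implemented: the filter is hand-written instead of learned, the pooling is max pooling (the talk did not name the pooling type), and the functions `convolve2d` and `max_pool` are hypothetical helpers written for this example.

```python
import numpy as np

def convolve2d(image, kernel):
    """Slide the filter over the image and record its response at each spot.
    (Like most deep learning code, this does not flip the kernel.)"""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    feature_map = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            feature_map[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return feature_map

def max_pool(feature_map, size=2):
    """Shrink the map by keeping the strongest response in each size x size block."""
    h = feature_map.shape[0] // size * size
    w = feature_map.shape[1] // size * size
    blocks = feature_map[:h, :w].reshape(h // size, size, w // size, size)
    return blocks.max(axis=(1, 3))

# Made-up 6x6 image: dark on the left, bright in the two rightmost columns.
image = np.zeros((6, 6))
image[:, 4:] = 1.0

# Hand-written filter that responds to vertical dark-to-bright edges.
vertical_edge = np.array([[-1.0, 0.0, 1.0]] * 3)

feature_map = convolve2d(image, vertical_edge)  # 4x4 map of edge responses
pooled = max_pool(feature_map)                  # 2x2 after shrinking
vector = pooled.flatten()                       # final vector for later layers

print(feature_map)
print(pooled)
print(vector)
```

In this toy case the final vector is nonzero only in the entries that correspond to the right-hand side of the picture, where the edge is, which is the sense in which the vector keeps geometric information.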

In 2012 the convolutional network revolution changed everything. Alex Krizhevsky and his research team created a network with five convolutional layers and three fully connected layers, eight in total. It was much more effective than other networks and contributed a lot to the fields of computer vision and machine learning. Until a few years ago no system could show a better result than a human, whose error rate is 5.33%. However, in 2015 Microsoft presented a deep convolutional neural network that beat a human. This means that on this task a computer can now analyze images better than people.

It may seem that the more layers a network has, the more effective it is, but that is not true. Researchers have made progress in a different way: instead of adding more and more layers, they add more neural networks.

The human brain has 80 to 130 billion neurons connected by 10 to 13 trillion synapses. Not all neurons are interconnected: each neuron has from 10 to several thousand connections. The brain is clearly much more effective: it has more parameters yet consumes less energy.

One might believe that AI and neural networks will not surpass the human brain in the near future, but that is not true. Modern AI is at about the level of insects: it is weak now, but it is growing exponentially. According to researchers’ forecasts, AI will become more powerful than human intellect between 2020 and 2045. Then a great revolution will start, because AI will be able to develop without human assistance. Nobody can even imagine the results.

For now, AI is used as an assistant. For example, quadcopters are taught to detect forest trails while flying above a forest. At this stage AI does exactly what a human orders, so it cannot disobey.

However, AI can learn. For instance, there is a system that prepares a latte by itself. It was shown how a coffee machine works, then worked out an algorithm and managed to make a cup of coffee, even though it had never seen this done before.

One of the best examples of machine learning systems is Google’s driverless car. The cars are equipped with deep learning and computer vision systems and carry special sensors on the roof. Using lasers, they observe the environment, analyze it and build a map.

The car then sends this information to a computer so that a human can view it. Bikes are marked in red, cars in pink and green, and pedestrians in yellow. The smart car follows many algorithms, as its AI is programmed to make decisions depending on the situation. This system is based on deep neural networks. For example, the computer detects a bike, understands that the cyclist has raised a hand and wants to turn, and the car slows down to let the cyclist do so. Google’s main priority is safety. That is why, if somebody wants to overtake the car, it will not resist, so as to avoid an accident.