The lecture focused on recent approaches to forecast modeling of streaming sensor data with supervised machine learning in energy and railway infrastructures. The researcher also outlined the problems that modern technologies have to solve on the way to smart energy supply, smart logistics and smart cities. He then presented the results of introducing demand forecasting into real-life scenarios in European economic sectors, along with recent advances in the field.

Big Data has been employed in renewable energy production for several years now. Solar and wind power generators have always collected data. Thanks to Big Data, machine learning and forecast analytics, all of this data can now be correlated with satellite readings and meteorological forecasts. This allows companies to work more efficiently, because they know what to expect in given weather conditions.

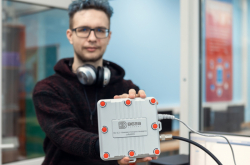

Machine learning and Big Data, however, can also be used in conventional energy production. A modeling sensor that produces forecasts for renewable energy systems can also be used for dynamic load management.

What makes up a well-developed measuring infrastructure? First, it has to include measuring sensors and new technologies. It has to be compatible with AMI (advanced metering infrastructure), which provides comprehensive IT solutions to energy-related issues. It also has to allow peak system load control and have an open software architecture, which ensures fast integration with existing systems and business process management. The next step is using the CIM (Common Information Model), which covers the major facilities of an electric power plant, those usually included in an EMS (Energy Management System). The model is suited to building integrated applications in heterogeneous computing systems. The last step is data from streaming (IoT) sensors.

To forecast utility load efficiently, the modeling sensor needs access to heterogeneous information sources: sensor data, forecast data and statistical data. Online forecasting proceeds in two steps: first, the information is collected by QMiner, an analytics platform for large-scale data streams, and Scikit-learn, a machine learning library; then the information is processed through JavaScript and Python APIs.
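As a rough illustration of online forecasting over heterogeneous sources, the sketch below merges one sensor reading, one weather forecast value and one historical statistic into a feature vector and updates a linear model one sample at a time. It is a minimal standard-library sketch, not the pipeline presented in the lecture: the class `OnlineLinearForecaster`, the helper `build_features`, the feature choice and the synthetic data are all assumptions made for illustration. In scikit-learn, estimators such as `SGDRegressor` expose a comparable incremental interface through `partial_fit`.

```python
import random
from dataclasses import dataclass, field


@dataclass
class OnlineLinearForecaster:
    """Hypothetical linear model trained one sample at a time with SGD."""
    n_features: int
    learning_rate: float = 0.1
    weights: list = field(default_factory=list)
    bias: float = 0.0

    def __post_init__(self):
        if not self.weights:
            self.weights = [0.0] * self.n_features

    def predict(self, x):
        return self.bias + sum(w * xi for w, xi in zip(self.weights, x))

    def update(self, x, y):
        """Take one gradient step on squared error.

        Returns the prediction made before the update, so the caller
        can track how well the model did on data it had not yet seen.
        """
        y_hat = self.predict(x)
        error = y_hat - y
        self.weights = [
            w - self.learning_rate * error * xi
            for w, xi in zip(self.weights, x)
        ]
        self.bias -= self.learning_rate * error
        return y_hat


def build_features(sensor_load, forecast_temp, hourly_mean_load):
    """Combine sensor, forecast and statistical inputs (assumed scaled to 0..1)."""
    return [sensor_load, forecast_temp, hourly_mean_load]


def run_demo(n_steps=3000, seed=0):
    """Stream synthetic samples through the model; the target is an assumed
    noise-free linear mix of the three inputs, chosen only for the demo."""
    rng = random.Random(seed)
    model = OnlineLinearForecaster(n_features=3)
    recent_errors = []
    for step in range(n_steps):
        x = build_features(rng.random(), rng.random(), rng.random())
        y = 0.5 * x[0] + 0.3 * x[1] + 0.2 * x[2]
        y_hat = model.update(x, y)
        if step >= n_steps - 100:
            recent_errors.append(abs(y_hat - y))
    return model, sum(recent_errors) / len(recent_errors)
```

Calling `run_demo()` returns the trained model and the mean absolute error over the last 100 streamed samples. Real load data is noisy and non-stationary, so a production model would need feature scaling, a tuned learning rate and ongoing evaluation.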

When all of these processes are coordinated, energy cycle operations can be managed more effectively using data from every source, including the hydrometeorological and time forecasts.

A railway modeling sensor requires the following information: on-board data, electric substation data, weather conditions and geolocation. Combining this information enables the sensor to produce realistic forecasts that can inform how the railway operates.
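One way to picture how these four inputs come together is to align them on a common timestamp before they reach the forecasting model. The sketch below is an assumption-laden illustration: the record type `RailwayObservation`, its field names and units, and the "latest value at or before the timestamp" alignment rule are hypothetical and are not taken from the lecture.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class RailwayObservation:
    """Hypothetical combined record; field names and units are assumptions."""
    timestamp: int              # seconds since some reference time
    train_speed_kmh: float      # on-board data
    substation_power_kw: float  # electric substation data
    air_temp_c: float           # weather conditions
    latitude: float             # geolocation
    longitude: float


def latest_at_or_before(readings, timestamp):
    """Return the most recent value not newer than `timestamp`.

    `readings` is a list of (timestamp, value) pairs sorted by timestamp.
    Returns None when no reading is old enough.
    """
    times = [t for t, _ in readings]
    idx = bisect_right(times, timestamp)
    return readings[idx - 1][1] if idx else None


def assemble(timestamp, onboard, substation, weather, position):
    """Build one observation, or None if any source has no usable reading yet."""
    values = [
        latest_at_or_before(source, timestamp)
        for source in (onboard, substation, weather, position)
    ]
    if any(v is None for v in values):
        return None
    speed, power, temp, (lat, lon) = values
    return RailwayObservation(timestamp, speed, power, temp, lat, lon)
```

For example, with on-board readings at times 0 and 10 and a substation reading at time 5, `assemble(12, ...)` uses the on-board value from time 10 and the substation value from time 5, while `assemble(3, ...)` returns `None` because no substation reading exists yet.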

Naturally, both of these sectors produce large volumes of data every day. To process all of this information, the sensors have to be adapted to work with Apache Kafka, a distributed event streaming platform that can durably store large volumes of data. It is effectively a high-throughput message bus that can handle all of the data as it passes through.
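The message-bus idea can be sketched without a running broker. The toy class below keeps an append-only log and a read offset per consumer, which is the basic pattern a log-based bus such as Kafka relies on. It is an in-memory stand-in written for illustration only: `InMemoryTopic`, `publish` and `poll` are hypothetical names and do not correspond to the API of any Kafka client library.

```python
from collections import defaultdict


class InMemoryTopic:
    """Toy append-only log with independent per-consumer read offsets."""

    def __init__(self):
        self._log = []                    # messages in arrival order
        self._offsets = defaultdict(int)  # consumer name -> next index to read

    def publish(self, message):
        """Append a message and return its position (offset) in the log."""
        self._log.append(message)
        return len(self._log) - 1

    def poll(self, consumer, max_messages=10):
        """Return the next unread messages for `consumer` and advance its offset."""
        start = self._offsets[consumer]
        batch = self._log[start:start + max_messages]
        self._offsets[consumer] = start + len(batch)
        return batch


# Usage: two consumers read the same stream at their own pace.
topic = InMemoryTopic()
for reading in ({"sensor": "s1", "kw": 410.0},
                {"sensor": "s2", "kw": 388.5},
                {"sensor": "s1", "kw": 402.3}):
    topic.publish(reading)

first_batch = topic.poll("forecaster", max_messages=2)
second_batch = topic.poll("forecaster", max_messages=2)
archive_batch = topic.poll("archiver")
```

Because messages stay in the log after they are read, the hypothetical `forecaster` and `archiver` consumers each see every reading, which is what lets several downstream models share one sensor stream.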

Active use of forecast modeling on streaming sensor data with supervised machine learning will allow plants to employ virtualization. This means that they will be able to create virtual power plants and manage demand, interest rates and loads, as well as their investment portfolios.

Sensors are a high-tech solution that improves the performance of railways and utility services, which means a better quality of life for the people who use them. Thus, this smart complex based on Big Data and machine learning will bring us one step closer to smart energy and smart cities.