What is easy and what's hard to automate: can robots operate outside plants and factories?

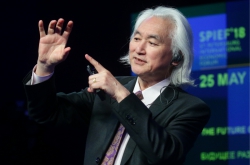

The media and the general public usually imagine robots as cute anthropomorphic helpers. In reality, the robots that do useful work look completely different: more like a tangle of machine parts bolted to an assembly line at a plant. Indeed, more than 50% of robots that do useful work are employed in car manufacturing. These are large, powerful mechanisms that are safe for people only because they are placed inside restricted areas. This is the situation we all want to change: we want robots to become part of our everyday environment. But how can we do that?

We don't use robots outside factories not because we don't need them there, but because we still can't really use them anywhere else. Why? Let's say a car manufacturing company buys a robot for $30,000. To use it properly, the company has to invest another half a million dollars in creating all the conditions the robot needs to work. The parts it handles have to be delivered by a conveyor system; it also needs sensors, cameras and other devices. In other words, half a million dollars are spent to compensate for the fact that the robot can't think like a human or sense what is happening around it.

The invention of industrial robots is certainly a colossal success for robotics. The industry's total volume is 40 billion dollars a year. Some forty years ago, cars were painted by people working in harsh conditions on the assembly line; today, virtually no plant in the world uses human labor for that. Yet these robots can still handle only structured tasks, where there is no uncertainty and everything can be programmed in advance; access to them is restricted precisely to exclude any unexpected factors.

Industrial robot. Credit: joie-de-vivre.ru


Can we create such conditions on the street, or in someone's apartment? No: there is too much uncertainty. Researchers understood this from the very beginning of robotics. Since then, there have been "easy" robotic tasks, which could be accomplished with existing methods and technologies (like the industrial robots used in car manufacturing), and "hard" tasks involving uncertainty, such as making robots do seemingly simple things like delivering newspapers or helping the elderly.

From household chores to navigating on Mars: why are robots so slow outside of plants?

Imagine that a robot's task is to bring a granny a glass of orange juice. What's so difficult about that? We can certainly build a robot today that can pour juice into a glass. That's not the hard part. The hard part is making sure the robot doesn't break the glass or hurt the granny while performing its duty. In other words, the challenge is making it understand and feel its surroundings. That's the irony: the function itself is easy; the problem lies in the surroundings. Making a robot that can deliver newspapers and put them into a mailbox is no problem; the hard part is making sure it doesn't fall into a pit or kill someone along the way.

Another good example is NASA's Mars rover. When it landed, the media proudly announced that all was well and that within six months it would cross a plain about the size of a football field. Let's do the math: six months to cover some 200 meters? Why does it have to move slower than a turtle?

The robot is controlled by an operator on Earth, and the operator does everything slowly and carefully. Just imagine how it goes: first, a command is sent to the robot to move 2 cm. It takes eight minutes for the signal to reach Mars, where the robot receives it, processes it, moves 2 cm and sends back a message that it has done so. Then it requests the next command. So it's no wonder that moving 200 meters takes six months. Yet why does it have to be like that? It's all because the robot can't think very well. We can't really blame it: it has very limited information about its surroundings.
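The figures quoted above already account for most of the delay. As a back-of-the-envelope check, here is a minimal Python sketch assuming strict stop-and-wait teleoperation: one 2 cm move per command, an 8-minute one-way signal delay (the lecture's figure; the real delay varies with the planets' positions), and no new command until the confirmation arrives. The function `teleop_days` and its `overhead_min` parameter are hypothetical names for illustration, not part of any NASA software.

```python
# Back-of-the-envelope estimate of stop-and-wait teleoperation on Mars.
# Assumptions (illustrative): 2 cm per command, 8 min one-way signal delay,
# and the operator waits for each confirmation before sending the next command.

def teleop_days(distance_m: float, step_m: float = 0.02,
                one_way_delay_min: float = 8.0,
                overhead_min: float = 0.0) -> float:
    """Minimum number of days needed to cover distance_m one command at a time.

    overhead_min is a hypothetical per-command allowance for the operator's
    decision time and the rover's own processing.
    """
    commands = round(distance_m / step_m)  # 200 m / 2 cm = 10,000 commands
    minutes_per_command = 2 * one_way_delay_min + overhead_min  # round trip + overhead
    return commands * minutes_per_command / (60 * 24)  # minutes -> days


print(f"{teleop_days(200):.0f} days")  # 111 days from signal delay alone
```

Signal delay alone gives about 111 days. If we assume, hypothetically, roughly 10 more minutes per command for the operator's decision and onboard processing, `teleop_days(200, overhead_min=10)` comes to about 181 days, or roughly six months, which is consistent with the estimate announced after landing.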

NASA's Mars rover


This is also why robots cannot handle many other tasks, including household chores. Most of us would like a smart vacuum cleaner that does the cleaning while we're out. Well, there is the Roomba. At first, its developers called it a robotic vacuum cleaner, but then they had to rename it a vacuum sweeper, as it could only pick up dust, not actually clean floors or carpets. It lacks the power to do that. Yet if we increased its power and left it completely on its own, we couldn't be sure of the consequences.

As of today, everything done in unstructured environments is controlled by an operator. This makes the process slow and cannot guarantee the necessary quality.

So, how can we make such systems work?

First of all, new sensors are needed. The robot has to feel its surroundings with every part of its body. It's the same in nature: if there ever were creatures without such sensing, evolution eliminated them long ago.

Vladimir Lumelsky's lecture


The term now used is whole-body sensing: sensors cover the robot's entire body, much like human skin. In fact, skin is our largest and heaviest organ. We can live without eyesight, hearing or the sense of smell, but we can't survive without the sense of touch.

Everything that has to do with skin is extremely important. Robotics has a similar idea: the "sensing skin", an artificial skin that can "feel". Developing such skin is an important part of our laboratory's research; we are running a project on it in collaboration with Hitachi. Touch in the literal sense matters less for such systems than it does for humans, since engineers can already replace it with something better. Bats, for instance, can sense objects at a distance, and we can give artificial skin a similar ability, for example by using infrared sensors.

The next thing we'll need is algorithms to process data from such sensors. That is a separate field of research with a lot to do with mathematics, programming and computer science. If we solve both problems, we will be able to create robots that act much faster and "smarter" than any operator.
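To give a feel for what such an algorithm might do with whole-body data, here is a minimal Python sketch of one very simple strategy, assuming the skin reports a distance from each infrared proximity sensor: the robot scales its speed by the closest obstacle seen anywhere on its body and stops when something gets too close. This is an illustration only, not the method developed in the lab; `SkinReading`, `safe_speed`, the patch names and the threshold values are all hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class SkinReading:
    patch: str         # hypothetical name of the body part carrying the sensor
    distance_m: float  # distance to the nearest object seen by this infrared sensor


def safe_speed(readings: Sequence[SkinReading], max_speed: float,
               stop_dist: float = 0.05, slow_dist: float = 0.30) -> float:
    """Scale the commanded speed by the closest obstacle seen anywhere on the body.

    Thresholds are illustrative assumptions: stop at or below stop_dist,
    full speed at or beyond slow_dist, linear ramp in between.
    """
    if not readings:
        return 0.0  # no sensing data at all: refuse to move
    nearest = min(r.distance_m for r in readings)
    if nearest <= stop_dist:
        return 0.0
    if nearest >= slow_dist:
        return max_speed
    return max_speed * (nearest - stop_dist) / (slow_dist - stop_dist)


if __name__ == "__main__":
    readings = [SkinReading("base", 1.2),
                SkinReading("forearm", 0.175),
                SkinReading("gripper", 0.5)]
    # roughly 0.5: the forearm sensor is halfway between the two thresholds
    print(safe_speed(readings, max_speed=1.0))
```

Taking the minimum over all patches is what makes this "whole-body": an obstacle near the forearm slows the robot just as much as one in front of its camera. A real system would also use where the obstacle is, not just how close it is, to plan a way around it.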

Vladimir Lumelsky's lecture


What tasks have to be solved for such robots to become a reality?

Well, first of all, we need the sensors, and sadly, that is a big problem. Some have already been developed, but they are not suitable for the purposes described above.

For now, artificial skin is something we can build in a university laboratory and demonstrate, but it is far from finished or ready for consumers. Research centers all around the world are working in this field. There have been many new inventions over the past 20 years, but sensors are still progressing very slowly. In electronics, progress mostly means miniaturization, like making smaller chips that pack in more elements. Here, however, we need something different.

Artificial skin embedded with minuscule sensors has to be produced in large quantities, printed like a newspaper. It is macroelectronics with micro-level components inside. The more sensors the skin carries, the better the robot will move. Yet we still aren't there. Researchers at a laboratory in Glasgow are working on this problem, but there is still no type of sensor that can be produced over large surfaces. Another idea is to weave such a surface and pull it onto the robot like a stocking; there were similar attempts in Japan. Yet another idea is to print the sensors directly onto the robot's body.

Vladimir Lumelsky's lecture


Beyond the sensors, we'll need corresponding programs, algorithms and strategies for using them. Still, I believe creating them is easier than making the sensors themselves. That's quite unusual, since it mostly happens the other way round: building hardware is usually easier than solving the intellectual problems.

Once that is done, we'll have to work out other details. For instance, if our robot moves on wheels, the task is easier, since we are working in two-dimensional space. If it is a manipulator moving in three-dimensional space, the task becomes harder.
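One way to see why the extra dimension hurts: if a planner divides the workspace into a uniform grid, the number of cells it may have to consider grows exponentially with the number of dimensions. The Python sketch below assumes, hypothetically, a 10 m workspace sampled every 10 cm; `grid_cells` is an illustrative name, and real planners use many smarter representations than a plain grid.

```python
# Illustrative only: how the search space of a grid-based planner grows with dimension.
# Assumption (hypothetical): a 10 m workspace sampled every 10 cm, i.e. 100 cells per axis.

def grid_cells(dims: int, extent_m: float = 10.0, resolution_m: float = 0.1) -> int:
    """Number of cells in a uniform grid covering `dims` axes of the workspace."""
    cells_per_axis = round(extent_m / resolution_m)
    return cells_per_axis ** dims


for dims, label in ((2, "wheeled robot on a floor"), (3, "manipulator in 3D space")):
    print(f"{label}: {grid_cells(dims):,} cells")
# wheeled robot on a floor: 10,000 cells
# manipulator in 3D space: 1,000,000 cells
```

Going from two to three dimensions multiplies the cell count by 100 in this example. For an arm, the picture is often worse still, since planning usually happens in the space of joint angles, where each joint adds another dimension.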

Also, although robots will continue to be built for particular tasks, we may still see the same effect as with laptops. The laptop you buy can do far more than you actually need it for. So why didn't they sell you a device that does only what you need? Because it turned out to be more profitable to make it multipurpose, so that anyone can use it for whatever they need. In a sense, it's the same with humans: the same person can work in a factory or in a circus. If something similar happens with robots, it will simply be a technological and commercial decision.

How soon can that happen?

It's hard to tell. Twenty-five years ago, I thought it would happen soon, but we still haven't made much progress. Yet we can't rule out some breakthrough.

According to some forecasts, by 2030 about 40% of professions will be taken over by robots. What do you think of that?

Twenty or thirty years ago, we thought that the areas requiring little intellectual labor would be the first to be automated. But we were wrong. Today, the USA trains 30% fewer lawyers than it did 10 years ago, and training a lawyer takes a lot of time and effort. It turned out that it is much easier to automate a lawyer's work than the digging of ditches.

Whole-body sensing concept. Credit: frontiersin.org


Still, in the future more and more human workers will be replaced by robots. I see nothing that can stop the process. The fundamental problems of robotics can be solved gradually, and robots with simpler sensors can solve simpler tasks. For instance, navigation is much easier for flying robots, as they are less likely to collide with objects than robots moving on the ground. This means they may well progress faster.

Another thing we shouldn't forget is the social side of the problem. There are different views; for instance, some think that in the future people will be paid without having to go to work. That may one day become reality.

Vladimir Lumelsky graduated from LITMO's (ITMO's former name) Department of Computation Technologies in 1962 and is currently the university's only graduate to hold the title of Life Fellow of the Institute of Electrical and Electronics Engineers (IEEE). At different times, Mr. Lumelsky worked for companies such as Ford and General Electric. From 2004 to 2012, he worked at NASA's Goddard Space Flight Center, where he headed a laboratory researching space robotics; he also headed the robotics program at the National Science Foundation, USA.

Mr. Lumelsky has worked in Antarctica, including at the South Pole. He is also Professor Emeritus at the University of Wisconsin and the founding editor of the IEEE Sensors Journal.